Designing AI for Human Oversight, Not Automation

Why the most effective AI systems are built to support judgement, not replace it.

For much of the past decade, AI has been framed as an automation technology. The promise was efficiency: fewer manual processes, faster decisions, reduced human intervention.

But as AI systems move into real-world settings, particularly across heritage, creative industries, and public-sector workflows, a more nuanced understanding is emerging.

The most resilient and trusted AI systems are not those that eliminate human involvement. They are those designed explicitly for human oversight.

Automation removes people from the loop.

Oversight keeps people at the centre.

The Limits of Full Automation

In tightly bounded industrial processes, automation can work well. But in environments shaped by context, interpretation, and public trust, full automation carries significant risk.

Consider:

Heritage reconstruction that fills historical gaps without curatorial review

Automated content generation that misrepresents cultural nuance

Risk assessments in public infrastructure made without human validation

Classification systems that silently embed bias

In these settings, removing human judgement can undermine credibility and introduce subtle but significant error.

AI systems excel at pattern recognition and repetition. They struggle with ambiguity, contested interpretation, and ethical sensitivity, which are precisely the areas where human expertise is essential.

What Human-in-the-Loop Actually Means

Human-in-the-loop (HITL) is often misunderstood as a last-minute check. In practice, it is a design philosophy.

Effective oversight involves:

Escalation thresholds: Automatically flagging uncertain outputs for human review

Confidence visibility: Showing where the model is less certain

Editable outputs: Allowing experts to adjust or annotate results

Feedback loops: Using human corrections to improve future performance

Clear responsibility: Assigning ownership of final decisions

This structure ensures that AI enhances expertise rather than bypassing it.
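As a rough illustration, the five elements above can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not a reference implementation: the names (ModelOutput, ReviewedOutput, needs_review, finalise, corrections) and the 0.85 threshold are all hypothetical, and the model is assumed to report a confidence score between 0 and 1.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cut-off; in practice this would be tuned per use case.
REVIEW_THRESHOLD = 0.85


@dataclass(frozen=True)
class ModelOutput:
    item_id: str
    label: str
    confidence: float  # assumed to be a score in [0, 1], shown to the reviewer


@dataclass(frozen=True)
class ReviewedOutput:
    item_id: str
    label: str          # the final label after any human edit
    confidence: float   # kept visible alongside the final decision
    escalated: bool     # True if the output fell below the threshold
    owner: str          # the named person responsible for the decision
    corrected: bool     # True if the human changed the model's label


def needs_review(output: ModelOutput, threshold: float = REVIEW_THRESHOLD) -> bool:
    """Escalation threshold: flag uncertain outputs for human review."""
    return output.confidence < threshold


def finalise(output: ModelOutput, owner: str,
             corrected_label: Optional[str] = None) -> ReviewedOutput:
    """Editable output with clear responsibility: a named owner signs off."""
    label = corrected_label if corrected_label is not None else output.label
    return ReviewedOutput(
        item_id=output.item_id,
        label=label,
        confidence=output.confidence,
        escalated=needs_review(output),
        owner=owner,
        corrected=label != output.label,
    )


def corrections(reviewed: list) -> list:
    """Feedback loop: collect human corrections for later evaluation or retraining."""
    return [r for r in reviewed if r.corrected]
```

In this sketch nothing becomes final without passing through finalise, so every decision carries an owner, and the corrections list gives a simple record of where the model and the expert disagreed.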

Applications Across Sectors

Heritage and Cultural Reconstruction

AI can assist in reconstructing artefacts or buildings, but curators must determine what is historically defensible and what remains speculative. A layered approach, separating evidence from inference, protects scholarly integrity.

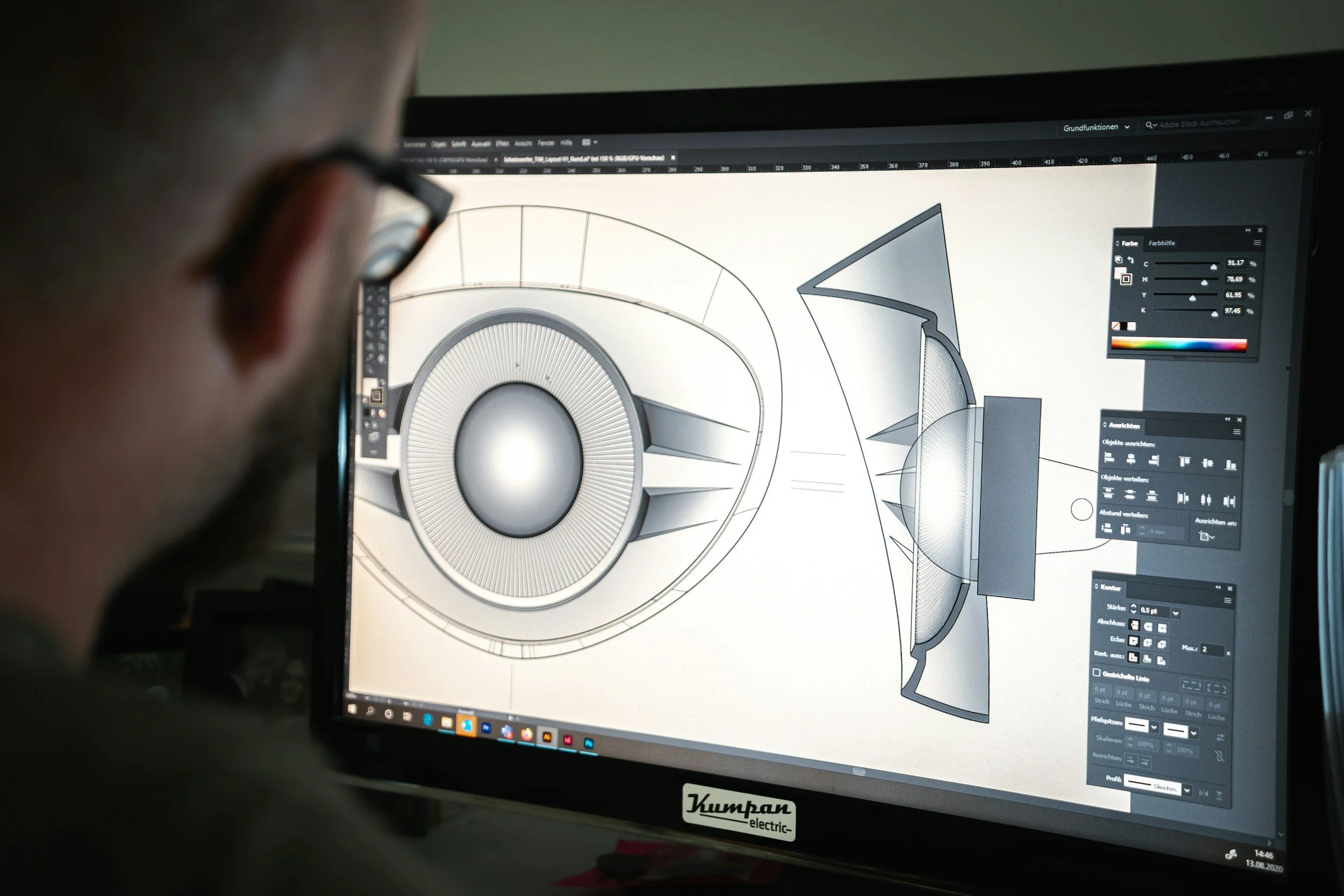
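One way to picture the layered approach in data terms is to tag every reconstructed element with its basis. The sketch below is illustrative only: the Basis and Element types, the approved_by field, and the rule that inferred elements need curatorial approval before display are assumptions made for the example, not a description of any particular project.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Basis(Enum):
    EVIDENCE = "evidence"    # directly supported by the record
    INFERENCE = "inference"  # proposed by a model or by analogy


@dataclass(frozen=True)
class Element:
    name: str
    basis: Basis
    source: str                        # citation for evidence, method for inference
    approved_by: Optional[str] = None  # curator who accepted an inferred element


def layers(elements: list) -> dict:
    """Group elements by basis so each layer can be shown or hidden separately."""
    grouped = {basis: [] for basis in Basis}
    for element in elements:
        grouped[element.basis].append(element)
    return grouped


def publishable(elements: list) -> list:
    """Evidence passes through; inference is included only after curatorial approval."""
    return [
        e for e in elements
        if e.basis is Basis.EVIDENCE or e.approved_by is not None
    ]
```

Keeping the basis on each element means a viewer, a caption, or an export can always distinguish what is documented from what is proposed.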

Creative Workflows

Generative tools can accelerate drafts, layouts, or early-stage ideas. Designers and artists refine, contextualise, and shape outputs. Oversight preserves authorship and coherence.

Public Sector and Risk Assessment

AI can analyse patterns across datasets, but human reviewers interpret implications, consider community impact, and apply discretion.

In each case, AI acts as a collaborator, not an authority.

Why Oversight Builds Trust

As AI systems become more visible, public expectations are rising.

Communities increasingly expect:

Transparent decision-making

Clear explanation of automated support tools

The ability to question or challenge outputs

Assurance that humans remain accountable

Systems designed around oversight are easier to audit, easier to explain, and easier to defend.

They also reduce organisational risk. When decisions are documented and human-reviewed, accountability is preserved.

Designing for Oversight from the Start

Retrofitting oversight into a fully automated system is difficult. Oversight must be built in from the beginning.

Key design principles include:

Treat AI outputs as advisory unless explicitly validated

Make uncertainty explicit rather than hidden

Log both machine outputs and human edits

Provide simple mechanisms for override

Train staff not only to use AI, but to question it

This approach aligns particularly well with hybrid systems, where machine learning operates within explicit models or rule-based structures. By constraining outputs within known boundaries, organisations make oversight manageable and meaningful.
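Several of these principles can be combined in a single audit record. The following is a hedged sketch, assuming a risk-rating task where a rule-based layer permits only three ratings; ALLOWED_RATINGS, audit_record, and to_log_line are hypothetical names introduced for illustration.

```python
import json
from datetime import datetime, timezone
from typing import Optional

# Hypothetical rule-based boundary: the only ratings the wider system accepts.
ALLOWED_RATINGS = {"low", "medium", "high"}


def audit_record(item_id: str, machine_rating: str,
                 reviewer: Optional[str] = None,
                 human_rating: Optional[str] = None) -> dict:
    """Log the machine output and any human edit side by side."""
    if human_rating is not None and reviewer is None:
        raise ValueError("a human edit must name its reviewer")
    final = human_rating if human_rating is not None else machine_rating
    return {
        "item_id": item_id,
        "machine_rating": machine_rating,
        "human_rating": human_rating,
        "final_rating": final,
        # Make boundary violations explicit rather than hiding them.
        "within_bounds": machine_rating in ALLOWED_RATINGS,
        # Simple override: the human rating replaces the machine rating.
        "overridden": human_rating is not None and human_rating != machine_rating,
        # Outputs stay advisory until a named reviewer validates them.
        "status": "validated" if reviewer is not None else "advisory",
        "reviewer": reviewer,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


def to_log_line(record: dict) -> str:
    """Serialise one record as a JSON line for an append-only log."""
    return json.dumps(record, sort_keys=True)
```

Because the machine rating is never overwritten, the log shows both what the model proposed and what the reviewer decided, which is what makes later audit possible.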

What This Means for SMEs and Cultural Organisations

For smaller organisations, the goal is not maximum automation; it is maximum confidence.

Human-in-the-loop systems:

Protect institutional credibility

Preserve specialist expertise

Allow gradual adoption rather than disruptive overhaul

Support skills development rather than redundancy

Oversight is not a weakness. It is a strategic strength.

Final Thought

AI is often marketed as a substitute for human effort. In practice, its greatest value lies in amplifying human capability.

Designing AI for oversight rather than automation acknowledges both the power and the limits of the technology. It keeps judgement visible, responsibility clear, and trust intact.

The future of applied AI is not autonomous; it is collaborative.